AI | Legislation | EU | Policy | Tech

Free, Open, and Untouchable? Open-source enforcement gaps within the AI Act

By Antoine Cerqueira Da Costa — Guest author

Edited/Reviewed by: Francesco Bernabeu Fornara

April 25, 2026 | 13:00

Since the LLM boom in 2022, artificial intelligence (AI) has largely been thought of as a proprietary technology. From its launch, OpenAI’s ChatGPT has run on proprietary, closed-source models. Four years later, the trajectory has shifted as open-source AI models close the performance gap. The AI race is no longer a sprint reserved for Big Tech, and the implications of that shift are only beginning to be felt. The technology is now often detached from its provider: once these tools are published, anyone can download them, and they belong to everyone and to no one at the same time.

In Europe, the AI Act recognises the openness of AI, recalling the value of “free and open-source licences”. While the regulation does not centre on open-source AI, it does address it: its open-source provisions form a regulatory regime that operates in parallel to the one governing closed-source AI.

Under the AI Act, open-source AI has been given a specific regulatory regime, while sharing similar implementation pathways with its closed cousins. But, given the distinctive nature of open-source AI, major questions remain about how that regime can be enforced. This article focuses on that friction, one year before the AI Act is fully enforced. What will the regulation of open-source AI under the AI Act really look like?

Situating Open-source within the AI Act

The answer begins with a definition, or rather the absence of one. The AI Act was drafted during the emergence of Large Language Models (LLMs) like ChatGPT, in an attempt to regulate a moving target. This instability shaped the open-source AI regulatory regime: the technology was still emerging, and there was no consensus on how to define it. The AI Act therefore does not provide a single formal definition of open-source AI. Instead, it sets out two cumulative requirements for an AI model or system to be treated as open-source within the scope of the regulation: release under a permissive free and open-source licence (1), and public disclosure of its technical components (2).

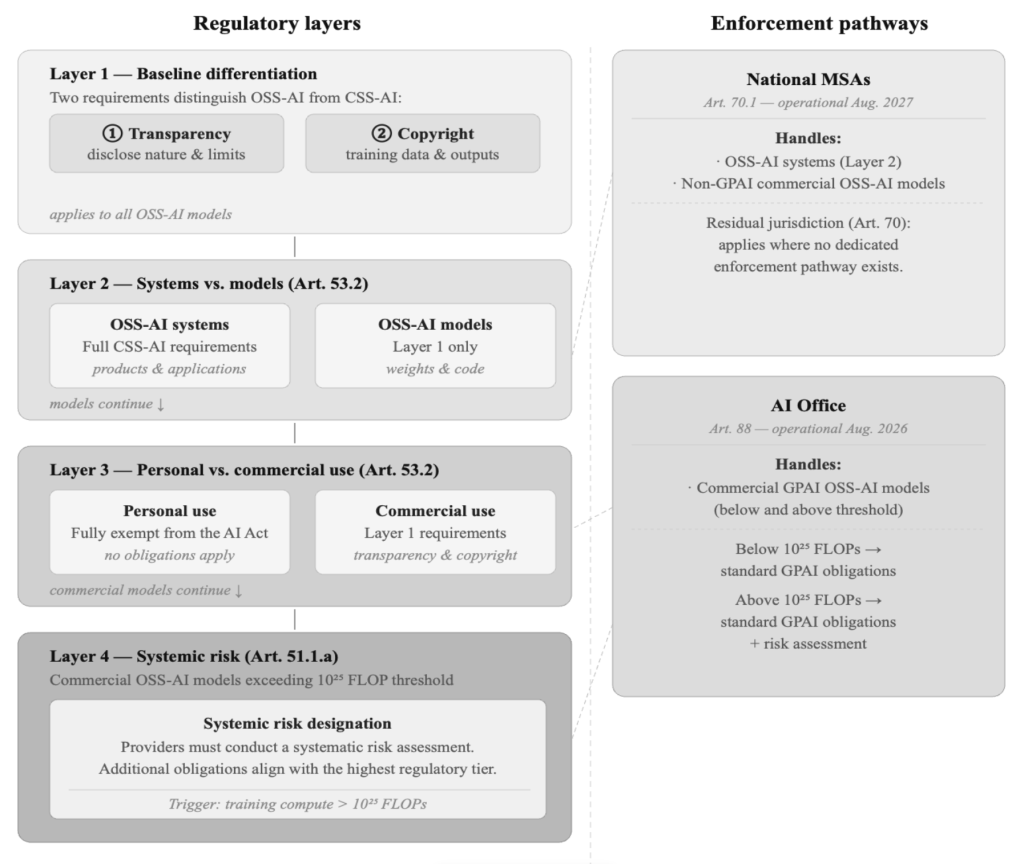

In line with the AI Act’s overall design, the provisions that address open-source AI operate within a tiered exemption regime that progressively narrows as deployment risk increases. The regime has four layers, each specifying its own exemptions and regulatory requirements. Mirroring these layers, enforcement follows different pathways, handled by distinct regulators: national Market Surveillance Authorities (MSAs) or the AI Office of the European Commission. Neither yet has enforcement powers. The AI Office will acquire them in August 2026, and MSAs a year after that.

This architecture was designed to mitigate limitations observed in the decentralised enforcement of other regulations. Under the General Data Protection Regulation (GDPR), providers engage in forum shopping among national regulators, establishing themselves in member states with favourable tax regimes. In Ireland, the pressure to levy heavy GDPR fines has directly clashed with the need to remain a tax-friendly haven for the providers it is supposed to police. A glaring conflict of interest.

To achieve uniform enforcement, the AI Act centralised the supervision of general-purpose AI (GPAI) models under the AI Office. GPAI models are those trained on large amounts of data using self-supervision and capable of performing a wide range of tasks. Centralising their oversight was a first step. In truth, however, the AI Office’s limited legal powers risk replicating the same harmonisation issues. Beyond its GPAI mandate, the AI Office remains an advisory body for MSAs, lacking the authority to revise or harmonise decisions made by national regulators. Embedded in a broader struggle over single-market integration, this arrangement reflects the friction within the Commission’s ambition to centralise digital regulatory oversight.

On top of this limitation, a barrier specific to open-source AI could arise. Since open-source AI is not the core focus of the regulation, regulators may lack the capacity for independent, technology-specific assessments. This could lead to a lack of genuine oversight, particularly among MSAs, whose enforcement capacity will vary from one member state to another.

Open-Washing and the limits of self-assessments

Layered on top of that institutional limitation, a substantial part of the AI Act’s regulatory framework for open-source AI consists of pre-market requirements that providers assess themselves. These ex-ante obligations vary widely depending on the type of model. From commercial GPAIs to open-source AI systems, all are subject to specific pre-market mandates.

From a regulatory point of view, under this regime, it is the providers’ self-assessment that drives compliance, tier placement and access to exemptions. Where requirements need not be verified externally, providers’ declarations escape independent scrutiny. The provider declares open-source status, asserts that its training compute falls below the systemic-risk threshold, and so on. This design makes the regime’s integrity rest primarily on the provider’s good faith and on the commercial and reputational incentives to comply.

Although this model is gaining traction in Brussels, its track record under the GDPR offers little comfort. Eight years on, nearly three-quarters of EU data protection professionals believed their companies would be found in violation if investigated. The AI Act is poised to inherit these exact weaknesses. For open-source AI, the issue deepens, as regulators are even less likely to identify violations. Neither the AI Office nor MSAs have the tools for independent verification. Even when providers submit documentation that appears compliant, regulators lack the means to check whether its statements are true. The future of open-source AI compliance risks becoming one where self-assessments pile up on regulators’ desks, certainly read, but most likely unverified, unaudited, and unchallenged.

The concern is not merely theoretical. In today’s “pre-enforcement era”, partial compliance is already the norm among open-source AI providers. A review of how models meet the baseline requirements that define open-source AI reveals concerning results. Among Chinese open-source AI models and GPAIs, 97% use permissive licences, but only 12% disclose their basic training data. Despite this clear gap with the legal definition, 88% of these Chinese models are accessible within the EU. The regulation has not yet been enforced, and the violations are already visible.

This is not a uniquely Chinese phenomenon. Across the Atlantic and within the EU, major GPAI providers are engaging in “open-washing”. They pass off “open-weight” models as “open-source” while withholding their training data, most famously in Meta’s communication around Llama. Along this blurry legal line, providers may be increasingly tempted to claim the open-source AI label solely to exploit its exemption regime, thereby escaping the more stringent requirements that apply to closed-source models.

For blatant violations such as “open-washing”, the first fines may be issued quickly. However, ex-post verification of requirements that depend on a model’s technical architecture may be constrained by regulators’ limited resources. The enforcement curve, in other words, will be steepest precisely where the violations are hardest to see.

The failure of extraterritoriality for non-EU open-source providers

Given the nature of the AI economy, whether EU law can reach beyond its borders is ultimately the most important question. The extraterritorial power of the open-source AI provisions can only be assessed against the track record of EU regulations with a similar enforcement structure. The EU’s flagship data protection regulation, the GDPR, bears a striking resemblance to the AI Act. It is widely seen as the EU’s strongest extraterritorial regulatory instrument, and it has multiple mechanisms that the AI Act lacks (e.g., adequacy decisions). Therefore, where the GDPR’s extraterritorial enforcement fails despite these additional tools, the AI Act’s enforcement is likely to fail as well.

The “Brussels Effect”, the idea that the weight of EU market access compels global compliance with its rules, has driven European regulatory ambition around AI. Historically, this “market power Europe” logic has operated through a simple commercial calculation: global firms weigh the cost of compliance against the economic gains of EU market access. The prize may be access to EU data flows under the GDPR, or access to EU consumers in the case of closed-source AI. Either way, compliance follows wherever market access is commercially valuable. For closed-source AI, market power holds, because the effect operates through the conditionality of commercial relationships.

However, for open-source AI, this commercial relationship is absent. The provider does not lose revenue if EU consumers do not use its model, as it is freely available online. Additionally, open-source AI providers do not control access to their models. Once they are publicly available, the provider cannot formally remove them from the internet or restrict access to them. The Brussels Effect depends on leverage; open-source AI dissolves it.

Meta’s open-source GPAI models exemplify this distinction. Since 2024, Meta’s multimodal Llama models, including Llama 4, have effectively been “banned” from the EU over GDPR concerns. Meta’s response was not compliance but withdrawal. It declined to officially release certain models in the EU rather than bear the cost of meeting its obligations. This illustrates the limits of the EU’s market leverage. For a provider with an EU establishment, region-locking was the rational economic choice. Compliance, in this instance, was simply not worth the trouble. The EU gained neither compliance nor a globally exported standard, only restricted access, which is in any case trivially easy to circumvent. Simply visit Ollama, and the strength of the EU’s prohibition becomes immediately apparent. In this scenario, regulatory authority produced neither compliance nor control, leaving EU citizens exposed to a model that is illegal under the GDPR.

For providers with no European foothold, the picture is bleaker still. First, when a provider lacks a physical or legal presence in the EU, the Union lacks a jurisdictional anchor. There are no assets to seize, no contracts to condition, and no local operations to pressure. Second, when the provider derives no revenue from the European market and favours free publication over restricted commercialisation, the economic incentive for compliance is absent. Third, once an open-source AI model is published online, the provider loses control over its distribution, making region-locking ineffective. In each of these cases, EU regulatory authority is clearly ineffective. These providers are not hypothetical: Chinese GPAIs such as DeepSeek, Qwen, and Kimi match this profile precisely and operate entirely beyond the reach of the EU.

The EU’s extraterritorial reach has already failed against such providers under the GDPR, and that failure may well repeat itself for open-source AI. Infamously, despite fines totalling approximately €100 million imposed by five EU regulators, Clearview AI has refused to pay them or to delete EU residents’ data. The company has ignored regulatory enforcement because the EU has no leverage over its operations. A fine that is never paid is not a fine; it is a press release.

The AI Act is a bold attempt to fence in the future, but for open-source AI, the fences are mostly cosmetic. Caught between “open-washing” by tech giants and the total lack of leverage over offshore providers, the enforcement of the AI Act’s open-source provisions risks being partial and easy to bypass, in some cases with a single click.

Disclaimer: While Euro Prospects encourages open and free discourse, the opinions expressed in this article are those of the author(s) and do not necessarily reflect the official policy or views of Euro Prospects or its editorial board.
